- COMP.SEC.100

- 21. Hardware Security

- 21.1 The Relationship Between the Design Process and Hardware Security (advanced)

Hardware security covers a broad range of topics, from trusted computing to hardware Trojans. To give these topics a structure, the first subsection below presents a model of hardware abstraction levels. It also introduces two concepts that are central to hardware security: roots of trust and threat models. The following sections do not aim to cover the entire field, especially since several topics are addressed in other modules (software, operating systems, cryptography). The last three sections cover secure platforms, side-channel and fault attacks, and entropy sources at the lowest abstraction level. CyBOK further discusses the measurement of security, hardware support for software security, register transfers, and finally returns to the hardware design process.

The Relationship Between the Design Process and Hardware Security (advanced)

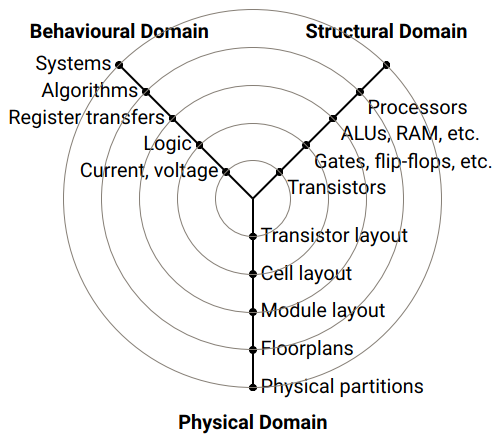

The many seemingly separate topics of hardware security are structured here according to hardware design abstraction layers, using the Gajski–Kuhn Y-chart from a hardware security perspective. This framework is also connected to threat models and the related concept of the root of trust. (Note: the terms layer and level are used interchangeably here, as in CyBOK and the hardware design literature.)

Hardware Design Abstraction Layers (advanced)

Abstraction layers are a central means of managing the complexity of hardware design. As shown in Figure 21.1, the lowest abstraction level considered by the designer consists of individual transistors. At the logic level, basic logic gates such as NAND and NOR are constructed from transistors, and the gates in turn are used to build flip-flops, among other elements.

At the register transfer level (RTL), logic gates are combined into larger entities such as registers, arithmetic logic units (ALUs), and other modules. Their operation is synchronized by a clock signal. At higher levels, these modules are combined into processors defined by instruction sets, upon which applications and algorithms can be implemented.

When moving from one abstraction layer to another, the details of the lower level are hidden. In this way, design complexity is reduced at higher levels. These abstraction layers are represented as concentric circles in the Gajski–Kuhn Y-chart.
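The way each layer hides the one below it can be illustrated with a small software model. The following is a minimal sketch, not a hardware description: the function names (`nand`, `full_adder`, `add_words`) are illustrative assumptions, and a real design would be written in a hardware description language such as VHDL or Verilog. It builds gates from a single NAND primitive, a full adder from those gates, and a word-level adder from full adders.

```python
def nand(a: int, b: int) -> int:
    """Logic-level primitive: output is 0 only when both inputs are 1."""
    return 1 - (a & b)


# Logic level: the remaining gates are composed purely from NAND.
def not_(a: int) -> int:
    return nand(a, a)


def and_(a: int, b: int) -> int:
    return not_(nand(a, b))


def or_(a: int, b: int) -> int:
    return nand(not_(a), not_(b))


def xor_(a: int, b: int) -> int:
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def full_adder(a: int, b: int, carry_in: int):
    """One-bit adder built from gates; returns (sum_bit, carry_out)."""
    partial = xor_(a, b)
    sum_bit = xor_(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return sum_bit, carry_out


def add_words(x: int, y: int, width: int = 8) -> int:
    """Module-level view: a ripple-carry adder on width-bit words.

    The caller sees only word addition modulo 2**width; the gates
    underneath are hidden, as they are at a higher abstraction layer.
    """
    carry, result = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # the final carry is discarded
```

A caller of `add_words(3, 4)` does not need to know that the result was produced by NAND gates. The same hiding is what makes security-relevant details, such as timing or power behavior at the gate level, invisible at the higher layer.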

Figure 21.1 Gajski & Kuhn Y-chart

The Y-chart organizes hardware design along three intersecting axes:

- behavioral axis, describing what the system is intended to do (definitions and specifications)

- structural axis, describing how the functionality is implemented structurally

- physical axis, describing the physical realization at the gate, module, chip, and board levels

The actual design work can be seen as a “walk” through this design space. Design typically begins at the top of the behavioral axis, i.e. with system specifications. These specifications (“what”) are decomposed into components at the same abstraction level (“how”) when moving from the behavioral axis to the structural axis. A structural component at one level becomes a behavioral model at the next lower abstraction level.

Example of a walk through the design space. Assume that a hardware designer is asked to implement a lightweight, low-power security protocol for an Internet of Things (IoT) device. Initially, the designer receives only high-level specifications: the goal of the protocol is to provide confidentiality and integrity (= what) and to use specific cryptographic algorithms (= components) to achieve these goals. The cryptographic algorithms are delivered to the hardware designer as a behavioral description. They may be implemented, for example, as a dedicated co-processor, as program code, or using custom instructions. Depending on costs and production volumes, either CMOS technology or an FPGA is selected as the target platform. The behavioral description is then translated into a more detailed register transfer level description (for example in VHDL or Verilog). At the RTL, key design decisions are made: whether the implementation is parallel or sequential, dedicated or programmable, and whether countermeasures against side-channel and fault attacks are included.

The essence of dividing design into abstraction layers lies in the use of appropriate models of component behavior at each level. For example, simulating the performance or energy consumption of an arithmetic unit requires sufficiently accurate models of lower levels, such as the gate level. Similarly, the instruction set architecture (ISA) visible to the programmer serves as a model of the processor and defines how the hardware appears to software development.

Root of Trust (advanced)

In the context of information security, a root of trust (also referred to as a trust anchor) is a model used to structure what system security evaluation ultimately relies on. It describes a component upon which security functions depend, even though the trustworthiness of that component cannot be fully verified. A designer uses one or more such components to construct a security function that forms the trusted computing base (TCB). The goal is that such a component always behaves as expected for its intended purpose; in that sense it can be trusted.

For example, for an application developer, a Trusted Platform Module (TPM) or a Subscriber Identity Module (SIM) acts as a root of trust upon which a security application is built. From the perspective of the TPM designer, however, the TPM is not an indivisible entity but a system composed of several smaller components that together provide the security functionality. At even lower hardware abstraction levels, roots of trust include, for example, the secure storage of a secret key in memory or the quality of a true random number generator (TRNG).

Hardware security acts as an enabler for software and system security. For this reason, hardware provides basic security services such as secure storage, isolation, and attestation. Software and systems view hardware as a trusted computing base and assume it behaves correctly. This trust assumption is not self-evident, however. A hardware implementation can violate trust through, for example, hardware Trojans or side-channel attacks, causing secret keys or other sensitive information to leak to an attacker. Therefore, hardware itself also requires protection, and this is not a problem of a single level alone—protection is needed at all abstraction layers. At each layer, a threat model and the associated trust assumptions must be defined. Accordingly, the definition can be refined as follows: “A root of trust is a component at a lower abstraction level upon which the system relies for its security. Its trustworthiness cannot be verified at all, or it is verified at an even lower abstraction level.”

Threat Model (advanced)

Each root of trust is associated with a threat model. When using a root of trust, it is assumed that the threat model is not violated. This directly links the threat model to the hardware abstraction layers. If we consider a root of trust at a particular abstraction layer, then all components that constitute this root of trust are also considered trusted.

Example 1: Secure key storage. Security protocols assume that a secret key is stored securely and is not accessible to the attacker. The root of trust for the protocol is then secure memory that prevents unauthorized reading or modification of the key. For the protocol designer, this secure memory appears as a black box. The task of the hardware designer, however, is to decompose this requirement into a lower abstraction level and address questions such as:

- What type of memory is used?

- Over which buses does the key travel within the system?

- Which other hardware or software components have access to the storage?

- Can the implementation cause side-channel leakage?

Example 2: ISA as a trust boundary. In programmable processors, the traditional model between hardware and software has been the Instruction Set Architecture (ISA). The ISA defines what is visible to the programmer, and its implementation is left to the hardware designer. For a long time, the ISA served as the core trust boundary for software designers. With microarchitectural side-channel attacks such as Spectre, Meltdown, and Foreshadow, however, this assumption no longer holds. The ISA is no longer a black box from the attacker’s perspective, since timing, power, and other microarchitectural leakages may also be exploited. As a result, the attack model shifts from a black-box to a gray-box model, in which parts of the internal behavior of the implementation are observable by the attacker.
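The shift from a black-box to a gray-box model can be illustrated with a deliberately simplified timing leak. The sketch below is an assumption-laden toy, not a model of Spectre, Meltdown, or Foreshadow: the secret, the function names, and the use of a step counter in place of measured execution time are all hypothetical. It shows how an attacker who observes only "how long" a comparison takes can recover a secret one byte at a time.

```python
SECRET = b"k3y!"  # hypothetical secret hidden behind the interface


def leaky_check(guess: bytes):
    """Early-exit comparison; returns (match, steps).

    `steps` stands in for the execution time that a gray-box
    attacker could measure from the outside.
    """
    steps = 0
    if len(guess) != len(SECRET):
        return False, steps
    for g, s in zip(guess, SECRET):
        steps += 1
        if g != s:
            return False, steps  # exits earlier the sooner a byte differs
    return True, steps


def recover_secret(length: int) -> bytes:
    """Recover the secret byte by byte from the step count alone."""
    known = bytearray()
    for pos in range(length):
        for candidate in range(256):
            padding = bytes(length - pos - 1)
            guess = bytes(known) + bytes([candidate]) + padding
            ok, steps = leaky_check(guess)
            # A correct byte lets the comparison run past position `pos`.
            if ok or steps > pos + 1:
                known.append(candidate)
                break
    return bytes(known)
```

In a pure black-box model the attacker would need up to 256**4 guesses for a four-byte secret. With the leaked step count, `recover_secret(4)` needs at most 4 * 256 calls, which is why observable internal behavior changes the threat model.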

Abstraction Layers (advanced)

The decomposition and partitioning of design into different abstraction layers, together with Electronic Design Automation (EDA) tools, has been a key reason why the exponential growth predicted by Moore’s law has been possible and sustainable over recent decades. This approach is particularly well suited for optimizing performance, area, energy efficiency, and power consumption. Although hardware security is (and must be) implemented at multiple levels, the systematic decomposition of security work into subcomponents is not as uniform as in performance- or energy-oriented design.

In this module, selected subtopics of hardware security are organized according to hardware design abstraction layers and linked to layer-specific threat models and roots of trust. This also makes it possible to assess the maturity and current state of different subfields. For example, in the context of hardware implementations of cryptographic algorithms, the state of the art is well advanced and effective countermeasures against side-channel attacks exist (see later subsection). In contrast, with respect to the security of general-purpose processors, new vulnerabilities and attack techniques continue to be discovered regularly. This applies, for example, to process isolation and secure execution (discussed briefly in the context of operating systems).

Table 21.1 presents a summary of hardware security topics organized by abstraction layers. The first column identifies the abstraction layers from a hardware perspective. The highest level, system and software, is situated above the hardware platform itself.

A system designer typically assumes that a secure platform is available, functioning as a root of trust and providing essential security services. These are described in the second column. The third column presents examples of how such functionality can be implemented. At higher abstraction layers, this may, for example, involve a trusted execution environment or a secure module. The fourth column describes the threat models and attack categories associated with each abstraction layer, and the final column presents typical design activities at that level.

Table 21.1: Design abstraction layers linked to threat models, roots of trust, and design activities

At the processor level, one can distinguish between general-purpose programmable processors and task-specific processors. General-purpose processors must support a wide range of applications, which often implies the presence of software-based vulnerabilities. To mitigate these, hardware-level security mechanisms have been added, such as shadow stacks or protections related to control-flow integrity. Task-specific processors are designed for limited functionality and are often used as co-processors in system-on-chip designs, for example to implement cryptographic algorithms. Time at the processor level is typically measured in instruction cycles.

Both general-purpose and task-specific processors are built from computational units such as multipliers and ALUs, memory, and interconnect structures. These modules are typically described at the register transfer level. At this level, constant-time behavior and resistance to side-channel attacks are central to security. Time is usually measured in clock cycles.
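Constant-time behavior means that the number of steps does not depend on secret data. The following minimal sketch shows the idea for a byte-string comparison. The function name `constant_time_equal` is an illustrative assumption, and Python itself gives no hard timing guarantees, so the sketch only shows the structure (no data-dependent early exit) that an RTL implementation would realize as a fixed number of clock cycles. For production Python code, the standard library function `hmac.compare_digest` serves this purpose.

```python
import hmac


def constant_time_equal(a: bytes, b: bytes) -> bool:
    """Compare two byte strings without a data-dependent early exit."""
    if len(a) != len(b):
        return False  # the length is treated as public information here
    diff = 0
    for x, y in zip(a, b):
        # Accumulate all differences; every byte pair is always processed.
        diff |= x ^ y
    return diff == 0


# Usage: both calls process all four byte pairs, regardless of where
# the first mismatch occurs.
same = constant_time_equal(b"k3y!", b"k3y!")
different = constant_time_equal(b"k3y!", b"x3y!")

# The standard library offers the same functionality.
same_stdlib = hmac.compare_digest(b"k3y!", b"k3y!")
```

The design choice is to replace the branch on each byte with an accumulated XOR, so that the loop always runs to the end and only the final result depends on the data.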

At the logic level, multipliers, ALUs, memories, and interconnect structures are built from gates and flip-flops. The main security concerns are physical attacks: side-channel leakage through power consumption or electromagnetic radiation, and the exploitation of faults. Temporal analysis is performed in absolute time, for example in nanoseconds, and delay values are based on available standard cell libraries or FPGA platforms.
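Leakage through power consumption is often reasoned about with a simple model in which the power drawn depends on the number of bits set in a processed value (the Hamming weight). The sketch below is a noise-free toy under that assumption; the key value, the function names, and the choice of `plaintext XOR key` as the leaking intermediate value are all hypothetical. Real attacks work on noisy measurements and use statistical methods, typically targeting a nonlinear intermediate value.

```python
def hamming_weight(x: int) -> int:
    """Number of 1 bits in x."""
    return bin(x).count("1")


def simulated_power(plaintext: int, key: int) -> int:
    """Hypothetical noise-free power sample: HW of plaintext XOR key."""
    return hamming_weight(plaintext ^ key)


def recover_key_byte(traces: dict) -> list:
    """Return every key guess whose predicted leakage matches all traces."""
    return [
        guess
        for guess in range(256)
        if all(hamming_weight(p ^ guess) == leak for p, leak in traces.items())
    ]


SECRET_KEY = 0x3C  # hypothetical key byte inside the device

# The attacker chooses plaintexts and records one leakage value for each.
traces = {p: simulated_power(p, SECRET_KEY) for p in range(256)}
candidates = recover_key_byte(traces)
```

With all 256 plaintexts observed, only the correct key byte is consistent with every trace, even though the attacker never reads the key directly.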

The design of entropy sources, such as true random number generators (TRNGs) or physically unclonable functions (PUFs), requires a deep understanding of transistor behavior and CMOS technology. Accordingly, these fundamental hardware security primitives are placed at the circuit and technology level. Similarly, the design of sensors and countermeasures against physical tampering requires detailed knowledge of the underlying implementation technology. At this level, performance and security are measured in terms of delays (ns) or clock frequencies (GHz).
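The quality of an entropy source is usually judged from its output bits. The following minimal sketch assumes a list of raw bits from a hypothetical TRNG and shows two standard textbook tools: a naive min-entropy estimate based only on the bias of the bits, and the von Neumann corrector, which removes bias under the assumption that the raw bits are independent. The function names are illustrative, and a real evaluation would rely on a stochastic model of the source and a much larger test suite.

```python
import math


def min_entropy_per_bit(bits) -> float:
    """Naive min-entropy estimate from the observed bias of the bits."""
    if not bits:
        raise ValueError("need at least one bit")
    p_one = sum(bits) / len(bits)
    p_max = max(p_one, 1.0 - p_one)
    return -math.log2(p_max)


def von_neumann_debias(bits) -> list:
    """Von Neumann corrector: read bits in pairs, map 10 -> 1 and 01 -> 0,
    and discard the pairs 00 and 11."""
    out = []
    for first, second in zip(bits[0::2], bits[1::2]):
        if first != second:
            out.append(first)
    return out


raw = [1, 1, 1, 0, 1, 1, 0, 1]  # hypothetical biased raw samples
estimate = min_entropy_per_bit(raw)  # below 1.0 because ones dominate
debiased = von_neumann_debias(raw)
```

The corrector trades throughput for quality: in this toy input, eight raw bits yield only two output bits.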

The purpose of Table 21.1 is not to present a comprehensive list of all hardware security topics, but to illustrate the nature of each abstraction layer through examples.